For more than a year, the iPhone has had exactly one officially integrated third-party AI assistant: OpenAI's ChatGPT. When Apple unveiled its partnership with OpenAI at WWDC 2024, it was the clearest signal that the world's most valuable company had chosen a single AI horse to bet on. That era may be ending sooner than anyone expected.

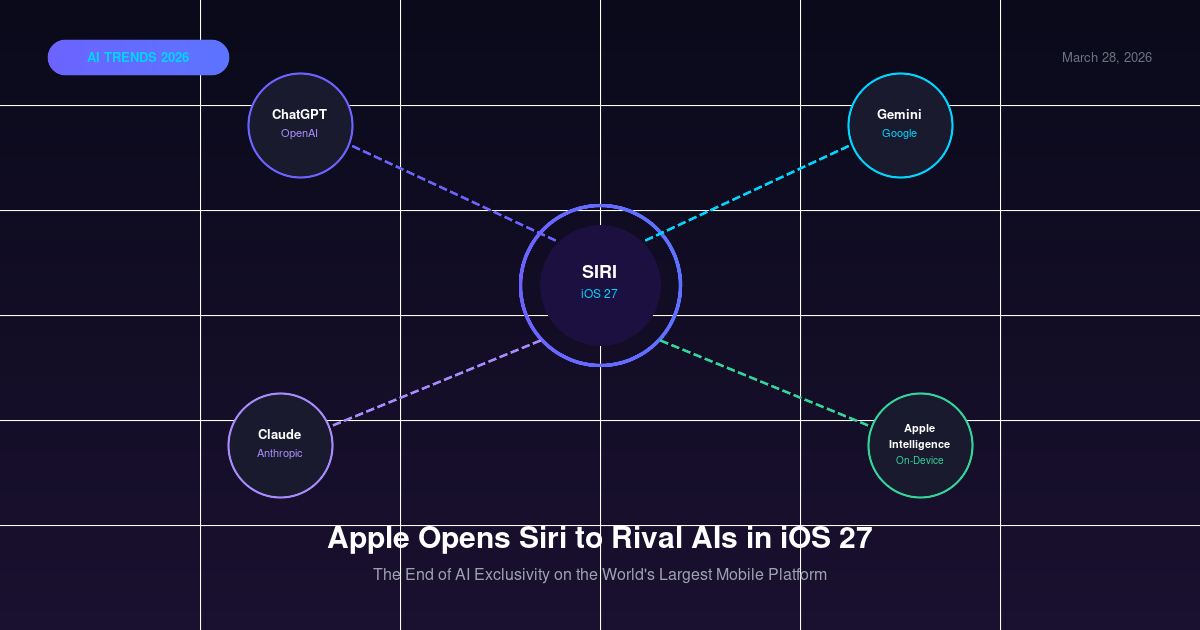

According to a March 26, 2026 report by Bloomberg, Apple is planning a fundamental shift in how Siri works. In iOS 27, iPadOS 27, and macOS 27, Siri is set to transform from a single-model assistant into a multi-model routing layer — a gateway that can direct user queries to Google Gemini, Anthropic Claude, OpenAI ChatGPT, or any other AI service that builds an approved Extension.

This is not a minor update. It represents a strategic acknowledgment from Apple that no single AI model can dominate every use case, and that its 2.2 billion active devices are more valuable as a distribution platform than as a captive user base for one AI partner. For the first time, the most privacy-conscious device maker on the planet is choosing to commoditize the AI layer rather than control it.

The implications ripple far beyond Apple’s ecosystem. If the largest mobile platform defaults to a “bring your own AI” model, every other device maker, operating system, and app platform will face pressure to follow. The AI assistant wars are not over — they are just entering their most competitive phase, where distribution, trust, and user experience matter more than model benchmarks.

This article breaks down what we know about Apple’s iOS 27 AI strategy, why the shift is happening now, and what it means for the future of AI competition.

What Is Apple Planning for Siri in iOS 27?

Apple plans to introduce a Siri Extensions system in iOS 27 that allows third-party AI applications downloaded from the App Store — such as Google Gemini and Anthropic Claude — to integrate directly with Siri. Users will be able to select their preferred AI service inside Settings → Apple Intelligence & Siri → Extensions, and Siri will route queries to the selected model, returning responses as if Siri itself had answered.
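To make the reported selection flow concrete, here is a minimal Python sketch of how a per-user Extensions preference might behave. Everything in it is hypothetical: `ExtensionSettings`, its methods, and the fallback to Apple's own models when nothing is selected are illustrative assumptions, not Apple's API.

```python
from dataclasses import dataclass, field
from typing import Optional, Set

# Hypothetical label for Siri answering with Apple's own models.
ON_DEVICE = "Apple Intelligence (on-device)"


@dataclass
class ExtensionSettings:
    """Hypothetical model of Settings > Apple Intelligence & Siri > Extensions."""
    installed: Set[str] = field(default_factory=set)  # AI apps installed from the App Store
    selected: Optional[str] = None                     # the user's preferred extension, if any

    def install(self, name: str) -> None:
        self.installed.add(name)

    def select(self, name: str) -> None:
        # Only an installed extension can become the preferred assistant.
        if name not in self.installed:
            raise ValueError(f"{name} is not installed")
        self.selected = name

    def uninstall(self, name: str) -> None:
        self.installed.discard(name)
        if self.selected == name:
            self.selected = None  # deleting the app clears the preference

    def target(self) -> str:
        # Assumption: with no selection, Siri keeps answering with Apple's models.
        return self.selected or ON_DEVICE


settings = ExtensionSettings()
settings.install("Claude")
settings.install("Gemini")
settings.select("Gemini")
print(settings.target())  # Gemini
settings.uninstall("Gemini")
print(settings.target())  # Apple Intelligence (on-device)
```

The key design point is that the preference lives in one place, so swapping assistants is a single state change rather than a per-app integration.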

| Feature | iOS 26 (Current) | iOS 27 (Planned) |
|---|---|---|
| Supported AI models | ChatGPT (exclusive) | ChatGPT, Gemini, Claude, and more |
| Selection mechanism | On/off toggle for ChatGPT | Per-model selection in Extensions |
| Routing architecture | Single handoff to ChatGPT | Multi-model gateway |
| Monetization | App Store commissions on ChatGPT | Commissions on all AI subscriptions |
| WWDC preview expected | — | June 2026 |

The Extensions framework is inspired by how Siri already integrates with third-party apps for tasks like ride booking and messaging. The AI version would be considerably deeper: AI models can potentially access Siri’s on-device context, such as calendar events, reminders, and recent conversations, when the user grants permission.
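The permission model described above amounts to default-deny filtering: only the context categories a user has granted travel with a request. A minimal sketch, assuming hypothetical category names (`ContextKind`) and a simple set of grants; Apple has not published how Extension permissions will actually be represented.

```python
from enum import Enum
from typing import Dict, List, Set


class ContextKind(Enum):
    """Hypothetical categories of on-device context Siri could share."""
    CALENDAR = "calendar"
    REMINDERS = "reminders"
    RECENT_CONVERSATIONS = "recent_conversations"


def shareable_context(device_context: Dict[ContextKind, List[str]],
                      granted: Set[ContextKind]) -> Dict[ContextKind, List[str]]:
    # Default-deny: a category is forwarded only if the user explicitly granted it.
    return {kind: items for kind, items in device_context.items() if kind in granted}


device_context = {
    ContextKind.CALENDAR: ["Dentist, Tuesday 10:00"],
    ContextKind.REMINDERS: ["Renew passport"],
    ContextKind.RECENT_CONVERSATIONS: ["Message thread with Alex"],
}
shared = shareable_context(device_context, granted={ContextKind.CALENDAR})
print([kind.value for kind in shared])  # ['calendar']
```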

Why Did Apple Shift Away From a Single AI Partner?

Apple’s original ChatGPT deal served a specific purpose: close the gap with Google Assistant and Samsung’s AI features quickly, without the multi-year R&D required to build a competitive large language model in-house. It worked as a stopgap. But the AI landscape in 2026 looks very different from 2024.

First, Apple Intelligence underperformed expectations. The suite of on-device AI features Apple shipped in iOS 18 was solid for privacy-sensitive tasks but lagged behind Gemini Ultra and Claude 3.7 Sonnet on complex reasoning and creative tasks. Users began bypassing Siri entirely for demanding queries.

Second, the competitive pressure from Android intensified. Google’s Pixel 10 ships with Gemini natively integrated at the OS level, and Samsung’s Galaxy S26 offers a choice between Gemini and its own Galaxy AI. A single ChatGPT integration was no longer a differentiator; it had become a limitation.

Third, the economics of AI subscriptions favor openness. Apple takes a 15–30% commission on subscriptions sold through the App Store. By steering more users toward paid AI tiers, whether Gemini Advanced, Claude Pro, or ChatGPT Plus, Apple profits from the entire AI subscription economy rather than from a single partnership deal.
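The commission arithmetic is easy to sketch. The example below assumes a hypothetical $20 monthly AI subscription and a simplified version of Apple's rules for auto-renewable subscriptions: 30% of each renewal during a subscriber's first year of paid service, 15% after that, and 15% throughout for App Store Small Business Program members. Taxes, regional pricing, and sign-ups made outside the App Store are ignored.

```python
from typing import Tuple


def apple_commission_cents(price_cents: int, billing_month: int,
                           small_business: bool = False) -> int:
    """Apple's share of one monthly renewal, in cents (simplified model).

    billing_month is 1 for a subscriber's first paid month, 13 for the first
    month after a full year of paid service, and so on.
    """
    # 15% for Small Business Program members or after one year of paid service; otherwise 30%.
    rate_pct = 15 if small_business or billing_month > 12 else 30
    return price_cents * rate_pct // 100


def commission_by_year(price_cents: int) -> Tuple[int, int]:
    """Apple's total take from one subscriber in year one and year two."""
    year_one = sum(apple_commission_cents(price_cents, m) for m in range(1, 13))
    year_two = sum(apple_commission_cents(price_cents, m) for m in range(13, 25))
    return year_one, year_two


# Assumed $20.00/month tier; the provider's identity does not enter the formula.
year_one, year_two = commission_by_year(2000)
print(f"Year 1: ${year_one / 100:.2f}, Year 2: ${year_two / 100:.2f}")
# Year 1: $72.00, Year 2: $36.00
```

Under these assumptions, every App Store subscriber yields the same revenue for Apple no matter which provider they pay, which is why more providers means more subscriptions to take a cut of.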

```mermaid
flowchart TD
    A[User Query via Siri] --> B{Siri Extension Router}
    B --> C[Apple Intelligence<br>On-Device]
    B --> D[ChatGPT<br>OpenAI]
    B --> E[Gemini<br>Google]
    B --> F[Claude<br>Anthropic]
    C --> G[Response to User]
    D --> G
    E --> G
    F --> G
```

How Does the Siri Extension Architecture Work?

The Siri Extension system is expected to rely on a standardized API that AI providers implement to handle requests forwarded by Siri. When a user's query exceeds what Apple Intelligence can handle locally, or when the user has selected a specific third-party AI, Siri packages the query with contextual metadata and sends it to the chosen model's cloud endpoint.

The response is returned to Siri, which surfaces it in the same conversational UI, making the underlying model largely invisible to the end user. This maintains Apple’s interface consistency while giving users access to the capabilities of best-in-class external models.
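The routing step can be sketched as a short decision function. This is a minimal Python illustration under stated assumptions: the `Query` flags stand in for whatever classifier Apple actually uses, the rule order (privacy and system actions first, then explicit requests, then escalation to the user's default) is a guess, and `route`, `RoutedRequest`, and the metadata keys are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional

ON_DEVICE = "Apple Intelligence (on-device)"  # hypothetical label for the local model


@dataclass
class Query:
    text: str
    privacy_sensitive: bool = False          # assumed classifier output
    system_action: bool = False              # e.g. setting a timer or toggling Wi-Fi
    exceeds_local: bool = False              # assumed: local model judged insufficient
    explicit_provider: Optional[str] = None  # e.g. the user named a specific AI in the request


@dataclass
class RoutedRequest:
    target: str
    text: str
    metadata: Dict[str, str] = field(default_factory=dict)


def route(query: Query, default_provider: Optional[str], online: bool,
          context: Dict[str, str]) -> RoutedRequest:
    """Hypothetical router; `context` is assumed to be permission-filtered already."""
    # 1. Privacy-sensitive tasks and system actions never leave the device.
    if query.privacy_sensitive or query.system_action:
        return RoutedRequest(ON_DEVICE, query.text)
    # 2. An explicit request wins; otherwise escalate to the user's default only when needed.
    provider = query.explicit_provider or (default_provider if query.exceeds_local else None)
    # 3. With no provider to use, or no connectivity, answer locally.
    if provider is None or not online:
        return RoutedRequest(ON_DEVICE, query.text)
    # 4. Package the query with contextual metadata for the provider's cloud endpoint.
    return RoutedRequest(provider, query.text, metadata=dict(context))


ctx = {"locale": "en_US", "next_event": "Dentist, Tuesday 10:00"}
print(route(Query("Set a timer for 10 minutes", system_action=True), "Claude", True, ctx).target)
# Apple Intelligence (on-device)
print(route(Query("Draft a cover letter", exceeds_local=True), "Claude", True, ctx).target)
# Claude
print(route(Query("Draft a cover letter", exceeds_local=True), "Claude", False, ctx).target)
# Apple Intelligence (on-device)
```

Putting the privacy rule first means even an explicit request for a third-party model cannot pull privacy-sensitive content off the device; a real router might instead ask the user to confirm.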

| Component | Role |
|---|---|
| Apple Intelligence on-device | Privacy-sensitive tasks, offline queries, system actions |
| Siri Extension Router | Query classification and model selection |
| Extension API | Standardized interface for third-party AI models |
| Settings UI | User-facing model selection and permissions |
| App Store | Distribution and subscription billing for AI apps |

The architecture closely resembles how browsers today handle search engine preferences — Apple becomes the browser, AI models become the search engines, and the Extension API is the standard interface between them.
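The browser analogy maps naturally onto a structural interface: any provider that implements the same request/response contract can be swapped in, much as a browser swaps search engines. A minimal sketch, with a hypothetical `SiriExtension` protocol and two stand-in providers (`ProviderA`, `ProviderB`); the real Extension API's shape has not been disclosed.

```python
from typing import Dict, Protocol


class SiriExtension(Protocol):
    """Hypothetical contract an AI provider would implement (not Apple's API)."""
    name: str

    def respond(self, text: str, context: Dict[str, str]) -> str: ...


class ProviderA:
    name = "ProviderA"

    def respond(self, text: str, context: Dict[str, str]) -> str:
        return f"ProviderA reply to: {text}"


class ProviderB:
    name = "ProviderB"

    def respond(self, text: str, context: Dict[str, str]) -> str:
        return f"ProviderB reply to: {text}"


def present(extension: SiriExtension, text: str, context: Dict[str, str]) -> Dict[str, str]:
    # Siri renders every reply in the same card; assumed: a small attribution names the model.
    return {"speaker": "Siri", "body": extension.respond(text, context), "via": extension.name}


for provider in (ProviderA(), ProviderB()):
    card = present(provider, "Summarize my week", {})
    print(card["speaker"], "|", card["body"])
# Siri | ProviderA reply to: Summarize my week
# Siri | ProviderB reply to: Summarize my week
```

Because `present` depends only on the protocol, changing the user's provider changes the answer text and attribution while the card Siri displays keeps the same shape.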

Which AI Assistants Are Expected in iOS 27?

Based on Bloomberg’s reporting and the current state of AI partnerships in the Apple ecosystem, the following are the most likely initial Extension partners:

| AI Assistant | Provider | Current iOS Status | iOS 27 Expected |
|---|---|---|---|
| ChatGPT | OpenAI | Deep integration (exclusive) | Continues, loses exclusivity |
| Gemini | Google | App only, no Siri integration | Full Siri Extension |
| Claude | Anthropic | App only, no Siri integration | Full Siri Extension |
| Copilot | Microsoft | App only | Possible Extension |
| Perplexity | Perplexity AI | App only | Possible Extension |

Apple has not confirmed which providers have signed Extension agreements. The June 2026 WWDC announcement is expected to reveal the initial partner roster.

What Does This Mean for the AI Competitive Landscape?

Apple’s move accelerates a winner-takes-most dynamic in the AI assistant market — but with an unexpected twist. The “winner” is no longer the model that controls the most devices. It is the model that delivers the best experience per query, because users can now switch models with a single settings toggle.

This is fundamentally a quality competition, not a distribution competition. OpenAI spent years optimizing for distribution; the iOS 27 Extension system effectively neutralizes that advantage by making every serious AI model equally accessible on 2.2 billion devices.

```mermaid
graph LR
    A[Pre-iOS 27<br>Distribution Lock-in] -->|iOS 27 disrupts| B[Post-iOS 27<br>Quality Competition]
    B --> C[Per-task model selection]
    B --> D[Subscription tier competition]
    B --> E[Context integration depth]
    B --> F[Privacy trust differentiation]
```

The winners in this new environment will be AI providers that:

- Offer compelling subscription tiers that justify App Store upgrades

- Invest in deep contextual integration — reading your calendar, files, and preferences

- Build user trust on privacy, especially given Siri’s access to sensitive device context

- Achieve measurable performance leads on the tasks iPhone users most commonly delegate to AI

For Google, this is a long-awaited opening. Gemini has been effectively locked out of the primary AI interaction surface on iOS. For Anthropic, it represents a major distribution milestone — Claude gaining a native Siri entry point on over one billion iPhones.

How Will OpenAI and ChatGPT Be Affected?

OpenAI’s position is the most complex. On one hand, ChatGPT retains its integration and the significant brand recognition it has built among iOS users. On the other hand, it loses the exclusive “default” status that made it the unchallenged first choice for Siri handoffs.

The historical parallel is instructive: Google reportedly paid Apple roughly $18 billion a year to remain Safari's default search engine, a deal that helped entrench Google's dominance in mobile search. OpenAI's original Siri deal followed a similar logic. With Extensions, Apple is effectively ending that logic for AI: no provider will hold the default slot by contract, because each user chooses it.

OpenAI’s best defensive move is to ensure ChatGPT’s Extension integration is the deepest and most polished at launch, combining the iOS 27 API surface with its existing advantage in creative writing, coding assistance, and multimodal understanding.

What Are the Risks and Open Questions?

Despite the strategic logic, Apple’s multi-AI approach carries real risks.

Fragmented user experience. When different users have different AI defaults, developers cannot assume a consistent capability baseline for Siri integrations. App developers building Shortcuts workflows may face compatibility headaches.

Privacy and data handling complexity. Routing a user query through a third-party AI means that query leaves Apple’s infrastructure. Apple will need to publish clear disclosure requirements for Extension providers — and users will need to understand which AI sees which data.

Regulatory scrutiny. A gatekeeper that controls which AI models gain access to 2.2 billion devices, and takes a commission on every subscription, will attract antitrust attention. The EU’s Digital Markets Act already requires Apple to allow alternative app stores; the AI Extension framework may face similar regulatory review.

These risks are manageable, but they reinforce a broader truth: the opening of the iOS AI layer is a beginning, not a resolution. The most consequential competition in AI is no longer about building the best model — it is about who earns the user’s trust to be their default intelligence layer.

FAQ

What AI assistants will Siri support in iOS 27? iOS 27 is expected to support Google Gemini, Anthropic Claude, and OpenAI ChatGPT via a new Extensions system. Users select their preferred assistant in Settings under Apple Intelligence and Siri, and Siri routes requests to the chosen model and returns responses.

Does iOS 27 remove ChatGPT from Siri? No. ChatGPT remains available but loses its exclusive status. The iOS 27 Extensions system adds Gemini, Claude, and potentially other assistants alongside ChatGPT, letting users choose their preferred AI rather than relying on a single provider.

When will iOS 27 be released with the new Siri features? Apple has not confirmed a release date. Industry analysts expect the iOS 27 feature set to be previewed at Apple’s Worldwide Developers Conference in June 2026, with a public release likely in September 2026 alongside new iPhone hardware.

How does Apple plan to make money from third-party AI in Siri? Apple earns a commission — typically 15 to 30 percent — on in-app subscriptions purchased through the App Store. Opening Siri to Gemini, Claude, and others drives more paid AI subscriptions through the iOS App Store, creating a new revenue stream from the AI subscription economy.

What does the iOS 27 AI strategy mean for the AI industry? It signals that proprietary single-model AI assistants are losing ground to open, multi-model ecosystems. Device makers are becoming neutral AI distribution platforms rather than betting on one model. This accelerates competition among AI providers and rewards those with the best user experience and deepest device integrations.

Will the iOS 27 Siri extensions work offline or only in the cloud? Third-party AI integrations rely on cloud inference, so an internet connection is required when routing requests to Gemini, Claude, or ChatGPT. Apple’s on-device Apple Intelligence models continue to run locally for privacy-sensitive tasks even without connectivity.