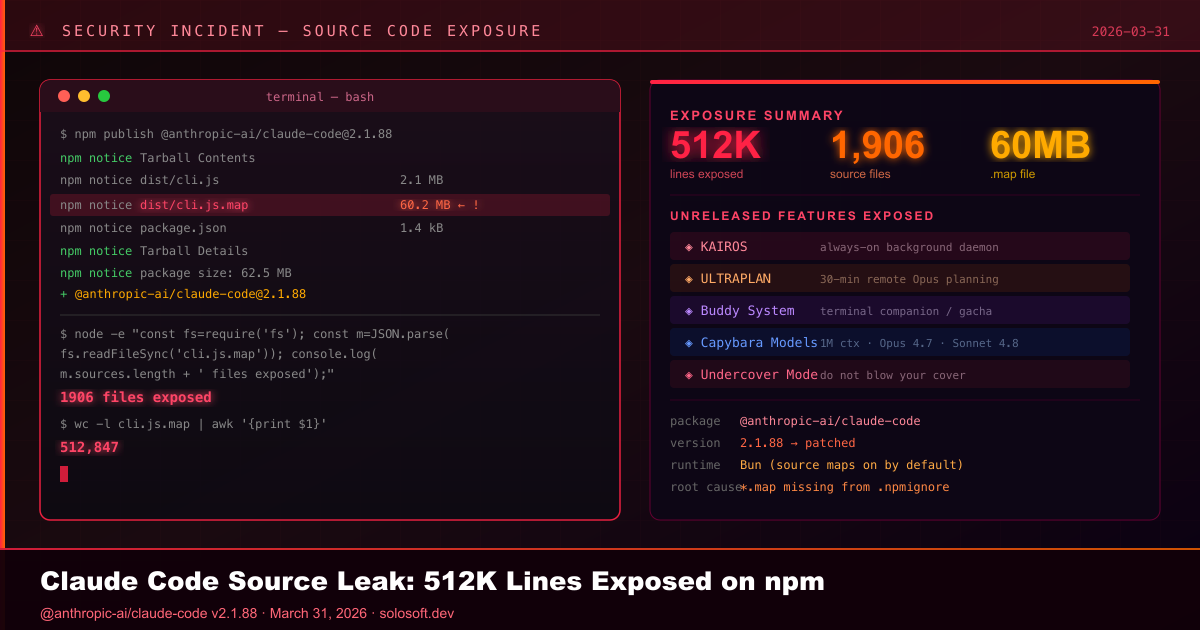

On March 31, 2026, a routine npm release turned into one of the most revealing accidental exposures in AI tooling history. Researchers discovered that version 2.1.88 of Anthropic’s @anthropic-ai/claude-code package included an unintended artifact: a 60 MB JavaScript source map file named cli.js.map. Inside that single JSON file, embedded as strings, sat 512,000 lines of original, unobfuscated TypeScript source code across 1,906 proprietary files.

Source map files are debugging tools. They help engineers translate minified production code back into a readable format when diagnosing crashes, and they are not meant for public distribution. Anthropic builds Claude Code with the Bun runtime, which generates source maps by default, and no one had added *.map to the project’s .npmignore configuration. As a result, npm served the entire codebase to anyone who installed the package or browsed its contents.

The irony was immediate. Inside the leaked source sat a complete internal system called “Undercover Mode”, a subsystem built specifically to prevent Claude Code from accidentally leaking Anthropic’s internal information into public repositories. The engineers had built a sophisticated tool to stop the AI from blowing its cover, and then the entire source shipped in a packaging artifact.

Anthropic confirmed the incident, describing it as “a release packaging issue caused by human error, not a security breach,” and stated it was rolling out measures to prevent recurrence. No user data, customer prompts, or model weights were involved. But the intellectual property exposure — including internal orchestration logic, system prompt architecture, emotion telemetry, unreleased features, and future model codenames — was already propagating across mirrors before the package was pulled.

What Happened in the Claude Code npm Leak?

Answer Capsule: On March 31, 2026, Anthropic accidentally included a 60 MB source map file in npm package @anthropic-ai/claude-code v2.1.88. The file contained 512,000 lines of unobfuscated TypeScript source across 1,906 files. No user data was exposed, but the full internal architecture — including unreleased features, system prompt logic, and upcoming model codenames — became publicly accessible to anyone who downloaded the package.

The disclosure was first surfaced publicly by Chaofan Shou, a researcher at blockchain security firm Fuzzland, who posted to X (formerly Twitter) that the package contained a complete source map. Within hours the code was forked across GitHub, making containment effectively impossible. Anthropic acknowledged the incident and stated that the package was being patched, but the leaked material had already propagated to external mirrors.

| Item | Detail |
|---|---|
| Package affected | @anthropic-ai/claude-code v2.1.88 |
| Leaked file | cli.js.map (~60 MB) |
| Lines of code | 512,000+ lines of TypeScript |
| Files exposed | 1,906 source files |
| Discovery date | March 31, 2026 |
| Data exposed | Source code, system prompts, feature flags, model codenames |
| Data NOT exposed | User data, customer prompts, model weights, API keys |
| Anthropic’s classification | “Human error, not a security breach” |

How Did a .map File Expose 512,000 Lines of TypeScript?

Answer Capsule: Claude Code is built with Bun, which generates JavaScript source map (.map) files by default. Source maps contain the original source code embedded as strings in JSON — their job is to help engineers debug minified production code. When Anthropic published v2.1.88, no one had added *.map to .npmignore, so the 60 MB file went live on npm with the entire unobfuscated codebase inside.

This is not a novel attack vector. Developers have been accidentally shipping source maps to npm for years. What made this incident notable was the scale — 512,000 lines of proprietary AI agent code — and the content.

flowchart TD
A[Bun bundler generates cli.js.map] --> B[Source map contains original TypeScript embedded as JSON strings]
B --> C[Engineer runs npm publish]
C --> D{.npmignore excludes .map files?}
D -->|No - missing exclusion| E[cli.js.map included in published package]
D -->|Yes - correct config| F[Only cli.js shipped, source protected]
E --> G[v2.1.88 goes live on npm registry]
G --> H[Researcher downloads package and extracts .map]
H --> I[512K lines of TypeScript readable by anyone]
I --> J[Code mirrored on GitHub before patch]

The root cause was simple: Bun’s bundler outputs source maps with sourcesContent arrays that literally contain every file’s original code as a string. Unlike traditional obfuscation, where reverse engineering requires effort, source maps hand over the original code directly. The fix is a single line (*.map in .npmignore) or disabling source map generation for production builds.
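To make the mechanism concrete, here is a minimal TypeScript sketch of how embedded files can be read out of a version 3 source map, plus a simple pre-publish guard that flags .map files. The function names `extractEmbeddedSources` and `findSourceMaps` and the tiny example map are illustrative assumptions, not code from the leaked package.

```typescript
// Only the source map v3 fields this sketch needs.
interface SourceMapV3 {
  version: number;
  sources: string[];
  // Optional in the spec; when present, entry i is the full text of sources[i].
  sourcesContent?: (string | null)[];
}

// Returns a path -> original source text mapping for every embedded file.
function extractEmbeddedSources(rawJson: string): Map<string, string> {
  const parsed = JSON.parse(rawJson) as SourceMapV3;
  const contents = parsed.sourcesContent ?? [];
  const recoveredFiles = new Map<string, string>();
  parsed.sources.forEach((sourcePath, i) => {
    const text = contents[i];
    if (typeof text === "string") {
      recoveredFiles.set(sourcePath, text);
    }
  });
  return recoveredFiles;
}

// Pre-publish guard: lists any source maps among the files about to ship.
function findSourceMaps(publishedFiles: string[]): string[] {
  return publishedFiles.filter((f) => f.toLowerCase().endsWith(".map"));
}

// Hand-written example map (hypothetical content, not taken from the leak).
const exampleMap = JSON.stringify({
  version: 3,
  sources: ["src/hello.ts", "src/empty.ts"],
  sourcesContent: ["export const hello = (): string => 'hi';\n", null],
  mappings: "",
});

const recovered = extractEmbeddedSources(exampleMap);
console.log(Array.from(recovered.keys()));
console.log(findSourceMaps(["cli.js", "cli.js.map", "package.json"]));
```

No reverse engineering is involved: a single JSON.parse call is enough, which is why excluding the file at publish time matters more than any later obfuscation.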

The funniest detail: the leaked source contained a complete Undercover Mode subsystem specifically engineered to prevent internal Anthropic information from appearing in public repositories. The system works. It just did not cover npm packaging pipelines.

What Unreleased Features Did the Leak Reveal?

Answer Capsule: The leaked source contained multiple features gated behind compile-time flags absent from public builds. The most significant are KAIROS (an always-on proactive background daemon), ULTRAPLAN (a 30-minute remote planning session using Opus 4.6), and Buddy (a complete Tamagotchi-style terminal companion with deterministic gacha mechanics). References to Capybara models, Opus 4.7, and Sonnet 4.8 were also found.

| Feature | Description | Status |
|---|---|---|
| KAIROS Mode | Always-on background daemon monitoring workflows, logging observations, acting proactively with 15-second blocking budget | Gated: PROACTIVE compile flag |
| ULTRAPLAN | Offloads complex planning to remote Cloud Container Runtime running Opus 4.6 for up to 30 minutes; result approved via browser | Gated: tengu_ultraplan config |
| Buddy System | Full Tamagotchi companion with deterministic gacha (Mulberry32 PRNG), 18 species, rarity tiers, shiny variants, Claude-written personality | Gated: BUDDY compile flag |
| DAEMON Mode | Background agent that continues working while user is away | Gated |
| Voice Mode | Push-to-talk via /voice command; rolling out to ~5% of users | Partial rollout |
| Capybara models | New model family including capybara-v2-fast with 1M token context window | Unreleased |
| Opus 4.7 / Sonnet 4.8 | Next-generation model references found in betas.ts | Unreleased |
| afk-mode / advisor-tool | Undisclosed beta API features negotiated internally | Unreleased |

The Buddy System code references April 1–7, 2026 as a teaser window, with full launch gated for May 2026. Each Buddy’s species is deterministically generated from the user’s ID hash with the salt 'friend-2026-401', ensuring consistent assignment without server-side state.
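A deterministic assignment of this kind can be sketched as follows. Mulberry32 is a well-known public-domain PRNG, and the salt and species count are the values quoted above. The FNV-1a hash, the way the user ID and salt are combined, and the helper name `speciesIndexFor` are assumptions made for illustration, since the article does not describe those details.

```typescript
// Mulberry32: a small seeded 32-bit PRNG that returns floats in [0, 1).
function mulberry32(seed: number): () => number {
  let a = seed >>> 0;
  return () => {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// FNV-1a 32-bit string hash. ASSUMPTION: the real hash function is not stated.
function fnv1a32(input: string): number {
  let h = 0x811c9dc5;
  for (let i = 0; i < input.length; i++) {
    h ^= input.charCodeAt(i);
    h = Math.imul(h, 0x01000193);
  }
  return h >>> 0;
}

const SALT = "friend-2026-401"; // salt quoted in the article
const SPECIES_COUNT = 18; // species count quoted in the article

// Hypothetical helper: maps a user ID to a stable species index in [0, 18).
function speciesIndexFor(userId: string): number {
  const rng = mulberry32(fnv1a32(userId + SALT));
  return Math.floor(rng() * SPECIES_COUNT);
}

// The same ID always yields the same index, with no server-side state.
console.log(speciesIndexFor("user-123") === speciesIndexFor("user-123")); // true
```

Because the seed depends only on the user ID and a fixed salt, any client can recompute the result, which is what makes server-side storage unnecessary.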

ULTRAPLAN contains a sentinel value __ULTRAPLAN_TELEPORT_LOCAL__ that literally “teleports” a remotely approved planning result back to the local terminal — an architectural pattern that implies Claude Code is evolving toward a hybrid local-cloud execution model.

What Does the Leak Reveal About Claude Code’s System Prompt Architecture?

Answer Capsule: The system prompt is not a single string. It is built from modular, cache-optimized sections at runtime. A SYSTEM_PROMPT_DYNAMIC_BOUNDARY marker separates static, organization-cacheable instructions from dynamic, user-session-specific content. Named functions like DANGEROUS_uncachedSystemPromptSection() indicate deliberate design decisions around API cost optimization — and that someone learned those lessons from production incidents.
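The caching idea can be illustrated with a short sketch. Only the marker name SYSTEM_PROMPT_DYNAMIC_BOUNDARY comes from the leaked source as described here; the marker's literal value, the `PromptSection` shape, and both function names are assumptions for illustration.

```typescript
// Marker name comes from the article; its literal value here is an assumption.
const SYSTEM_PROMPT_DYNAMIC_BOUNDARY = "<<SYSTEM_PROMPT_DYNAMIC_BOUNDARY>>";

// Hypothetical section shape, for illustration only.
interface PromptSection {
  id: string;
  text: string;
  cacheable: boolean; // true = identical across sessions, safe to cache
}

// Places all cacheable sections first, then the boundary, then dynamic ones.
function buildSystemPrompt(sections: PromptSection[]): string {
  const staticText = sections.filter((s) => s.cacheable).map((s) => s.text);
  const dynamicText = sections.filter((s) => !s.cacheable).map((s) => s.text);
  return staticText.concat([SYSTEM_PROMPT_DYNAMIC_BOUNDARY], dynamicText).join("\n");
}

// Everything before the boundary is the prefix a cache could reuse.
function cacheablePrefix(prompt: string): string {
  const idx = prompt.indexOf(SYSTEM_PROMPT_DYNAMIC_BOUNDARY);
  return idx === -1 ? "" : prompt.slice(0, idx);
}

const sharedRules: PromptSection = { id: "tools", text: "Tool usage rules.", cacheable: true };
const sessionA = buildSystemPrompt([sharedRules, { id: "cwd", text: "cwd: /repo/a", cacheable: false }]);
const sessionB = buildSystemPrompt([sharedRules, { id: "cwd", text: "cwd: /repo/b", cacheable: false }]);

// Two different sessions share a byte-identical prefix.
console.log(cacheablePrefix(sessionA) === cacheablePrefix(sessionB)); // true
```

Keeping volatile content strictly after the boundary is what keeps the prefix stable; a section that changes per request but sits before the marker would defeat the cache, which is presumably what the DANGEROUS_ prefix warns about.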

flowchart LR
A[System Prompt Builder] --> B[Static Section<br>cacheable across organizations]
A --> C[Dynamic Section<br>user-session specific]
B --> D[SYSTEM_PROMPT_DYNAMIC_BOUNDARY marker]
C --> D
D --> E[Final system prompt sent to API]
E --> F{USER_TYPE?}
F -->|ant - Anthropic employee| G[Undercover Mode injected<br>Do not blow your cover]
F -->|standard user| H[Normal operation]
E --> I[DANGEROUS_uncachedSystemPromptSection<br>volatile content that intentionally breaks cache]

Three specific system prompt discoveries stood out:

Undercover Mode — When an Anthropic employee uses Claude Code on a public repository, the system automatically injects instructions preventing the AI from disclosing internal codenames, development decisions, or Anthropic-internal context in commit messages or pull request descriptions. The instruction reads: “You are operating UNDERCOVER… Your commit messages MUST NOT contain ANY Anthropic-internal information. Do not blow your cover.” There is no force-off switch. If the system cannot confirm it is in an internal repo, it stays undercover.

Emotion Telemetry — A file called userPromptKeywords.ts uses regex to flag user prompts containing expressions of frustration (terms like “this sucks,” “so frustrating”). This data does not alter Claude’s real-time response. It is telemetry, piped to Anthropic’s product team to identify UX friction points at scale — before users file formal complaints.

CYBER_RISK_INSTRUCTION — A named section in constants/cyberRiskInstruction.ts draws explicit governance boundaries around authorized security testing versus destructive techniques, maintained by named individuals on a specific internal team.
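The Emotion Telemetry approach described above amounts to keyword matching that produces an event without touching the response path. A minimal sketch, using only the two phrases quoted in this article; the event shape and the function name `detectFrustration` are hypothetical, and the actual pattern list in userPromptKeywords.ts is not reproduced here.

```typescript
// Only the two phrases quoted in the article; the real list is not shown here.
const FRUSTRATION_PATTERN = /\b(this sucks|so frustrating)\b/i;

// Hypothetical telemetry event shape.
interface FrustrationEvent {
  kind: "frustration_signal";
  matched: string;
}

// Returns an event when a phrase matches, or null otherwise.
// The prompt itself is never modified, mirroring "telemetry only" behavior.
function detectFrustration(prompt: string): FrustrationEvent | null {
  const m = FRUSTRATION_PATTERN.exec(prompt);
  if (m === null) return null;
  return { kind: "frustration_signal", matched: m[0].toLowerCase() };
}

console.log(detectFrustration("Ugh, THIS SUCKS, the build broke again"));
console.log(detectFrustration("Please refactor the parser"));
```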

How Does Claude Code’s Multi-Agent Architecture Actually Work?

Answer Capsule: Claude Code can switch from single-agent to Coordinator Mode, spawning and directing multiple parallel worker agents via XML-based task notifications and a shared scratchpad directory. Its tool system contains 40+ permission-gated modules. A background subagent called autoDream consolidates memory after three conditions: 24 hours elapsed, 5+ sessions completed, and a consolidation lock acquired.

The leaked codebase shows a tool architecture built from composable, permission-gated, agent-facing modules, the same design direction the Model Context Protocol (MCP) specification implies.

| Component | Size or location | Role |
|---|---|---|
| Base tool definition | 29,000 lines | Schema, permissions, execution logic for all tools |
| Query Engine (QueryEngine.ts) | 46,000 lines | LLM API calls, streaming, caching, chain-of-thought loops |
| Coordinator Mode | coordinator/ directory | Spawns and manages parallel worker agents |
| autoDream | services/autoDream/ | Background memory consolidation subagent |
| Permission system | tools/permissions/ | ML-based YOLO classifier for risk classification |

Coordinator Mode transforms Claude Code from a single agent into an orchestrator:

- Research phase — Workers investigate the codebase in parallel

- Synthesis phase — Coordinator reads findings and crafts implementation specs

- Implementation phase — Workers make targeted changes per spec

- Verification phase — Workers test and confirm changes

Workers communicate via <task-notification> XML messages. The coordinator prompt explicitly instructs: “Do NOT say ‘based on your findings’ — read the actual findings and specify exactly what to do.”

autoDream solves context entropy — the degradation of AI performance in long sessions — with a three-gate trigger: 24 hours elapsed, at least 5 sessions completed, and a consolidation lock acquired. When triggered, it runs four phases: Orient (read memory), Gather Recent Signal (extract new facts), Synthesize (resolve contradictions), and Prune (write compact MEMORY.md).
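The three-gate trigger can be sketched as a small predicate. The thresholds (24 hours, 5 sessions) are the ones stated above; the state shape, the function names, and the in-memory lock are assumptions for illustration, not the leaked implementation.

```typescript
// Hypothetical bookkeeping a consolidation service might track.
interface ConsolidationState {
  lastConsolidatedAtMs: number; // timestamp of the previous consolidation
  sessionsSinceLast: number; // sessions completed since then
}

const MIN_ELAPSED_MS = 24 * 60 * 60 * 1000; // gate 1: 24 hours
const MIN_SESSIONS = 5; // gate 2: at least 5 sessions

// Creates a simple in-memory lock; a real one would need to be cross-process.
function makeLock(): () => boolean {
  let held = false;
  return () => {
    if (held) return false;
    held = true;
    return true;
  };
}

// All three gates must pass. The lock is tried last so that a run that
// fails the cheap checks never takes the lock.
function shouldConsolidate(
  state: ConsolidationState,
  nowMs: number,
  tryAcquireLock: () => boolean
): boolean {
  if (nowMs - state.lastConsolidatedAtMs < MIN_ELAPSED_MS) return false;
  if (state.sessionsSinceLast < MIN_SESSIONS) return false;
  return tryAcquireLock();
}

const demoNow = 1_000 * MIN_ELAPSED_MS;
const demoState: ConsolidationState = {
  lastConsolidatedAtMs: demoNow - 25 * 60 * 60 * 1000,
  sessionsSinceLast: 6,
};
console.log(shouldConsolidate(demoState, demoNow, makeLock())); // true
```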

What Are the Security Implications for Developers?

Answer Capsule: No user data was exposed, but the full transparency of Claude Code’s internal security validation logic, telemetry schemas, path traversal protections, and feature flags creates concrete downstream risks. Readable source makes vulnerability research faster, exposed UX patterns enable higher-quality lookalike malware, and the published internal architecture gives adversaries a roadmap for crafting targeted exploits against the CLI and its dependency chain.

The permission system revealed by the leak is sophisticated — but its transparency cuts both ways:

| Risk Category | Specific Risk | Recommended Action |
|---|---|---|
| Vulnerability research | Readable path traversal protections and validation logic allow faster zero-day discovery | Pin and verify CLI version immediately |
| Malware imitation | Exposed UX flows, logging messages, and install behavior enable higher-quality trojanized Claude Code packages | Increase vigilance for lookalike npm packages |
| Supply chain hygiene | Stray .map files in internal artifact mirrors may expose additional codebases | Audit internal npm mirrors for *.map files |
| Token exposure | High-value API tokens on developer workstations | Tighten egress controls; review local secret handling |
| Feature flag exploitation | Published internal feature flags and environment variables | Audit CI/CD pipelines for undocumented env var access |

Notably, there have already been separate reports — unrelated to this incident — of infostealers disguised as “Claude Code” downloads. The leaked source increases the risk that future lookalikes will be more convincing.

The YOLO permission classifier (ironically named, since it denies risky actions rather than approving them) uses ML-based risk classification at LOW/MEDIUM/HIGH levels. Path traversal prevention handles URL-encoded attacks, Unicode normalization, backslash injection, and case-insensitive manipulation. These protections are now fully documented for adversaries.
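For readers unfamiliar with these four traversal tricks, the following sketch shows one generic way to neutralize them before checking that a path stays inside a root directory. It is a textbook-style illustration under stated assumptions, not the leaked implementation: the function names are hypothetical, percent-decoding is applied only once, and a requested path is always treated as relative to the root.

```typescript
// Decodes, normalizes, and resolves a path into lowercase segments.
// Returns null when the input is malformed or climbs above the top level.
function canonicalSegments(rawPath: string): string[] | null {
  let decoded: string;
  try {
    decoded = decodeURIComponent(rawPath); // "%2e%2e" -> ".."
  } catch {
    return null; // malformed percent-encoding
  }
  const unified = decoded
    .normalize("NFKC") // fullwidth dots and slashes -> ASCII
    .replace(/\\/g, "/") // backslashes -> forward slashes
    .toLowerCase(); // case-insensitive comparison
  const segments: string[] = [];
  for (const part of unified.split("/")) {
    if (part === "" || part === ".") continue;
    if (part === "..") {
      if (segments.length === 0) return null;
      segments.pop();
    } else {
      segments.push(part);
    }
  }
  return segments;
}

// True when `requested`, joined onto `root`, still resolves inside `root`.
function isInsideRoot(root: string, requested: string): boolean {
  const rootSegs = canonicalSegments(root);
  const fullSegs = canonicalSegments(root + "/" + requested);
  if (rootSegs === null || fullSegs === null) return false;
  if (fullSegs.length < rootSegs.length) return false;
  return rootSegs.every((seg, i) => fullSegs[i] === seg);
}

console.log(isInsideRoot("/workspace/project", "src/index.ts")); // true
console.log(isInsideRoot("/workspace/project", "%2e%2e/secret")); // false
```

The key design choice is to canonicalize first and compare second: every encoding trick is collapsed into the same plain form before the containment check runs.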

What Did Anthropic Say, and What Should Teams Do Now?

Answer Capsule: Anthropic classified the incident as “a release packaging issue caused by human error, not a security breach” and confirmed no user data was exposed. Security teams should immediately pin the installed Claude Code CLI version, audit internal npm mirrors for .map files, tighten egress controls on developer workstations, and increase vigilance for lookalike packages — treating the event as a supply chain hygiene incident regardless of Anthropic’s classification.

Anthropic moved to patch the package and stated it is implementing measures to prevent recurrence. However, the leaked material had already been widely mirrored externally. The fundamental characteristic of source code exposure is that it cannot be un-exposed once propagated.

Immediate recommended actions for teams using Claude Code:

- Pin the CLI version: stop floating installs on latest; capture the installed version and package integrity metadata

- Audit internal mirrors: check whether any Claude Code packages in your artifact repository include *.map files with embedded sourcesContent

- Review token handling: ensure API keys are not logged, cached in world-readable locations, or exposed via shell history

- Increase package vigilance: validate the publisher and integrity hash of any Claude Code update before deployment

- Evaluate egress controls: developer workstations that hold high-value tokens or access sensitive repositories warrant tighter network controls
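As a starting point for the version-pinning item, a small check like the one below can flag dependency specs that float (latest, caret or tilde ranges, wildcards). This is a sketch under assumptions: the helper names are hypothetical, the version numbers in the example are placeholders, and the regular expression only recognizes plain exact semver strings.

```typescript
// True only for an exact version such as "1.2.3", optionally with
// prerelease or build metadata. Ranges, tags, and wildcards return false.
function isExactVersion(spec: string): boolean {
  return /^\d+\.\d+\.\d+(-[0-9A-Za-z.-]+)?(\+[0-9A-Za-z.-]+)?$/.test(spec.trim());
}

// Returns the names of dependencies whose version spec is not pinned.
function findFloatingDeps(deps: Record<string, string>): string[] {
  return Object.keys(deps).filter((pkg) => !isExactVersion(deps[pkg] ?? ""));
}

// Example dependency block; the version numbers are placeholders.
const exampleDeps: Record<string, string> = {
  "@anthropic-ai/claude-code": "latest",
  "pinned-lib": "1.2.3",
  "caret-lib": "^4.0.0",
};

console.log(findFloatingDeps(exampleDeps));
```

A check like this fits naturally into CI, alongside a lockfile that records integrity hashes for the pinned versions.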

FAQ

What was leaked in the Claude Code source code incident?

On March 31, 2026, Anthropic accidentally included a 60 MB JavaScript source map file (cli.js.map) in npm package @anthropic-ai/claude-code v2.1.88. The file contained over 512,000 lines of original TypeScript source code across 1,906 files, exposing Claude Code’s full internal architecture, system prompt logic, and unreleased features.

How did the Claude Code source map leak happen?

Claude Code is built with Bun, which generates source map files by default. Engineers failed to add *.map to the .npmignore configuration before publishing. Source map files embed the original source code as strings inside JSON, making the entire codebase accessible to anyone who downloaded or inspected the npm package.

What is KAIROS mode in Claude Code?

KAIROS is an unreleased always-on background daemon found in the leaked source. It proactively monitors developer workflows, logs observations to append-only daily files, and acts autonomously within a 15-second blocking budget. It is gated behind the PROACTIVE compile-time feature flag and is completely absent from all public Claude Code builds.

What is Undercover Mode in Claude Code?

Undercover Mode is injected into the system prompt when Anthropic employees use Claude Code on public repositories. It instructs the AI not to include any Anthropic-internal information in commit messages or pull requests, explicitly stating: “Do not blow your cover.” It activates automatically and cannot be forced off; it stays on unless the repository remote is on an internal allowlist.

Was user data or model weights exposed?

No. Anthropic confirmed that no user data, customer prompts, conversation history, or model weights were exposed. The incident was limited to client-side CLI source code. However, the exposure of internal security validation logic creates downstream risks for organizations using the tool.

What is the Capybara model family?

The leaked source references an unreleased Claude model family codenamed Capybara, including a capybara-v2-fast variant with a 1-million token context window in fast and thinking tiers. The codebase also references Opus 4.7 and Sonnet 4.8, which have not been publicly announced.

What should organizations do after the Claude Code leak?

Pin the CLI version, audit internal npm mirrors for stray .map files, review local API token handling practices, increase vigilance for lookalike packages, and tighten egress controls on developer workstations. Treat the event as a supply chain hygiene incident requiring verification — not just an IP exposure affecting only Anthropic.

Further Reading

- npm Package: @anthropic-ai/claude-code - Official npm registry page for the Claude Code CLI

- npm package.json, files field and .npmignore - Official npm documentation on the files array and .npmignore for controlling which files are included in published packages

- Bun Bundler, Standalone Executables and Source Maps - Bun’s bundler documentation covering the --sourcemap flag and how to configure or disable source map output for production builds

- Model Context Protocol (MCP) Specification - The open standard that Claude Code’s tool architecture validates and extends