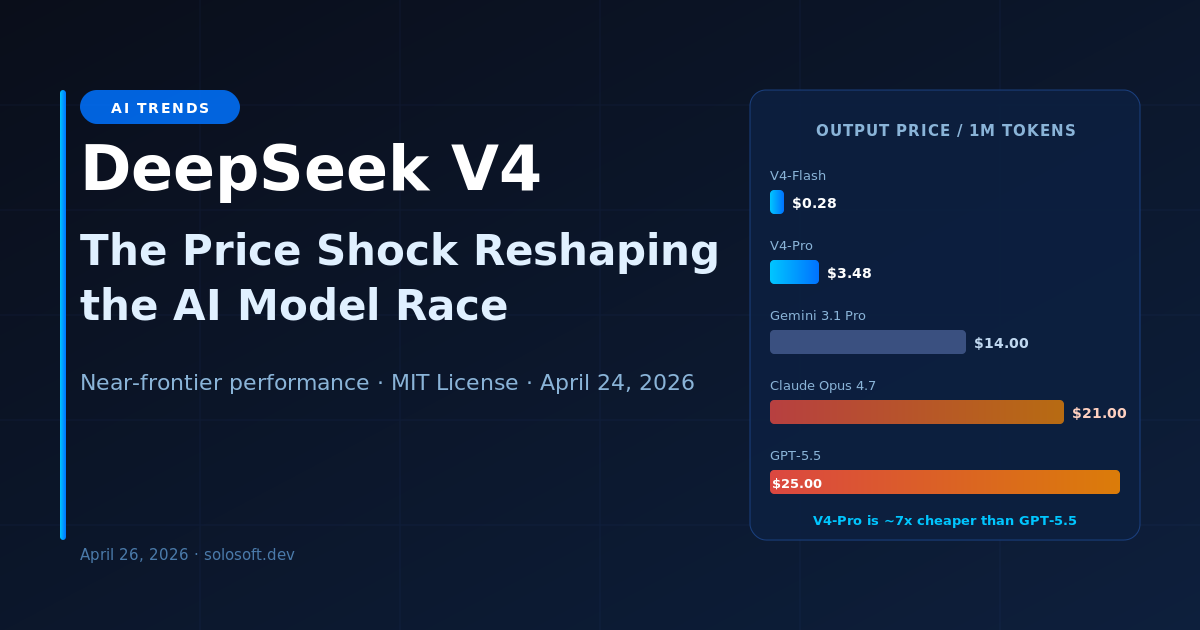

On April 24, 2026, DeepSeek released two new models — V4-Pro and V4-Flash — that immediately rattled the pricing assumptions underlying every enterprise AI budget. The V4-Pro’s output cost of $3.48 per million tokens sits at roughly one-seventh of GPT-5.5’s price and one-sixth of Claude Opus 4.7’s. For teams running at any scale — production coding assistants, RAG pipelines, customer service automation — the math is hard to ignore. This is not a modest incremental release. It is the latest entry in what is becoming a structural pricing war, one where China’s AI labs are using capital-efficient architectures and lower operating costs to compress the cost-per-intelligence unit faster than the market can absorb.

The story is bigger than price. DeepSeek V4-Pro scores 80.6% on SWE-bench Verified, just 0.2 points behind Claude Opus 4.6 — the current top-ranked US model. On coding benchmarks that measure real production performance, such as LiveCodeBench and Terminal-Bench 2.0, V4-Pro actually leads all frontier models outright. This is a model that is not quite at the absolute frontier of reasoning, but is close enough, and cheap enough, that the ROI calculus for many enterprise use cases has fundamentally shifted. The release also signals something strategically important: DeepSeek has deepened its integration with Huawei’s Ascend chips, a direct response to US export controls on Nvidia hardware. What began as a constraint has evolved into a supply chain strategy. The AI race in 2026 is being fought simultaneously on benchmarks, pricing, and chip independence.

What Is DeepSeek V4 and Why Does It Matter?

DeepSeek V4 is a family of two models — V4-Pro and V4-Flash — both built on Mixture-of-Experts architecture and released under the MIT License on April 24, 2026. V4-Pro targets maximum performance with 1.6 trillion total parameters (49B active per token), while V4-Flash targets efficiency with 284 billion total parameters (13B active). Both were trained on 32–33 trillion tokens and feature a 1 million-token context window enabled by Hybrid Attention Architecture.

What matters is the combination: near-frontier performance, open weights, MIT licensing, and a price point that undercuts every US competitor by a factor of six to ten. For the enterprise market, the question is no longer “can we afford frontier AI?” but “why are we paying frontier prices for tasks where V4-Pro is close enough?”

How Does DeepSeek V4 Compare to OpenAI and Anthropic?

DeepSeek V4-Pro delivers performance within 3 to 6 months of the absolute frontier across most benchmarks, at one-seventh the API cost. On coding-specific tasks it leads all comers outright — a meaningful advantage for the software teams that represent the majority of enterprise LLM spend.

| Model | Provider | Total Params | Active Params | Context Window | SWE-bench Verified | Output Price / 1M tokens |

|---|---|---|---|---|---|---|

| DeepSeek V4-Pro | DeepSeek | 1.6T | 49B | 1M tokens | 80.6% | $3.48 |

| DeepSeek V4-Flash | DeepSeek | 284B | 13B | 1M tokens | ~65% | $0.28 |

| Claude Opus 4.7 | Anthropic | Undisclosed | Undisclosed | 200K tokens | ~82% | ~$21.00 |

| GPT-5.5 | OpenAI | Undisclosed | Undisclosed | 128K tokens | ~81% | ~$25.00 |

| Gemini 3.1 Pro | Undisclosed | Undisclosed | 2M tokens | ~79% | ~$14.00 |

The table reveals the core tension: if SWE-bench Verified performance is within 1 to 2 points across all models, the 6x to 10x price gap becomes the dominant decision variable for most production workloads.

What Makes V4-Pro’s Architecture So Efficient?

DeepSeek V4 uses a Mixture-of-Experts (MoE) architecture — a design that activates only a small fraction of total model parameters for each token. Instead of routing every input through all 1.6 trillion parameters, V4-Pro activates just 49 billion per token. This reduces compute costs dramatically without sacrificing the capacity that comes from a large parameter count.

The MoE router learns during training which expert sub-networks handle which types of tasks best. Coding tokens go to code-specialist experts. Reasoning tokens go to logic-specialist experts. The result is a model with the knowledge surface of a 1.6T parameter model at the inference cost of a 49B model.

flowchart TD

Input[Input Token Stream] --> Router[MoE Gating Router<br>Learned per Token Type]

Router --> E1[Code Expert Cluster]

Router --> E2[Reasoning Expert Cluster]

Router --> E3[Language Expert Cluster]

Router --> EN[Domain Expert N]

E1 --> Agg[Weighted Output Aggregation]

E2 --> Agg

E3 --> Agg

EN --> Agg

Agg --> Out[Final Model Response]Paired with the Hybrid Attention Architecture, which extends effective memory across 1 million tokens, V4-Pro achieves what most enterprise users actually need: large context, strong coding, and reasonable reasoning — at inference cost that scales with the active parameter count, not the total.

What Does DeepSeek V4 Cost and How Does It Compare?

The pricing gap between DeepSeek V4 and US frontier models is not a rounding error — it is a structural difference in cost basis that compounds at scale.

| Model | Input Price / 1M tokens | Output Price / 1M tokens | Cached Input Price / 1M tokens | License |

|---|---|---|---|---|

| DeepSeek V4-Flash | $0.07 | $0.28 | $0.018 | MIT |

| DeepSeek V4-Pro | $1.74 | $3.48 | $0.43 | MIT |

| Gemini 3.1 Pro | ~$5.00 | ~$14.00 | ~$1.25 | Proprietary |

| Claude Opus 4.7 | ~$7.00 | ~$21.00 | ~$1.75 | Proprietary |

| GPT-5.5 | ~$10.00 | ~$25.00 | ~$2.50 | Proprietary |

At 10 billion output tokens per month — a realistic volume for a mid-sized enterprise with active coding and customer-facing AI tools — the monthly cost difference between V4-Pro and GPT-5.5 is approximately $215 million versus $250 million. Scale that to a year and the saving exceeds the cost of deploying an internal AI team. For startups and early-stage companies, the comparison is even more stark: V4-Flash at $0.28 per million output tokens makes LLM capabilities effectively zero-cost at small scale.

Which Benchmarks Does DeepSeek V4-Pro Actually Win?

DeepSeek V4-Pro leads the field on coding benchmarks while sitting slightly behind the absolute frontier on broad reasoning and knowledge tasks. This is a deliberate optimization: the workloads where enterprises spend the most compute are also the workloads where V4-Pro performs best.

| Benchmark | DeepSeek V4-Pro | Claude Opus 4.6 | GPT-5.5 | Gemini 3.1 Pro | What It Measures |

|---|---|---|---|---|---|

| SWE-bench Verified | 80.6% | 80.8% | ~81.0% | ~79.0% | Real GitHub issue resolution |

| LiveCodeBench | 93.5% | 88.8% | ~90.0% | ~88.5% | Competitive coding problems |

| Terminal-Bench 2.0 | 67.9% | 65.4% | ~64.0% | ~63.0% | Autonomous CLI task completion |

| Codeforces Rating | 3206 | ~3150 | ~3100 | ~3050 | Competitive programming rank |

| MMLU Pro | ~76% | ~80% | ~81% | ~80% | Graduate-level knowledge |

| Humanity’s Last Exam | ~44% | ~52% | ~54% | ~51% | PhD-level reasoning |

The pattern is clear: V4-Pro wins on applied coding and loses on general reasoning. For the majority of enterprise LLM workloads — code generation, PR review, documentation, agentic software tasks — this trade-off is highly favorable.

Why Does DeepSeek’s Huawei Integration Signal More Than Just a Chip Deal?

DeepSeek V4’s close integration with Huawei Ascend chips is not a backup plan — it is a strategic declaration that China’s AI stack can function at frontier scale without Nvidia hardware. After US export controls cut off advanced Nvidia GPU access, DeepSeek did not slow down. It optimized.

flowchart LR

V1[DeepSeek V1<br>2023<br>First Open Model] --> V2[DeepSeek V2<br>2024<br>MoE Architecture]

V2 --> R1[DeepSeek R1<br>Jan 2025<br>Reasoning Shock]

R1 --> V3[DeepSeek V3<br>Dec 2024<br>Frontier Benchmark Claims]

V3 --> V4[DeepSeek V4-Pro<br>Apr 2026<br>Price and Chip Independence]The Ascend integration has three strategic implications. First, it reduces DeepSeek’s exposure to future US hardware sanctions. Second, it creates a vertically integrated Chinese AI stack from chip to model that does not depend on the US supply chain at any layer. Third, it demonstrates that Huawei’s Ascend chips — previously dismissed as second-tier — can train and serve models at frontier-adjacent scale when the software stack is optimized for them. This is the AI chip equivalent of Apple Silicon: a bet that vertical integration beats commodity hardware.

For US policymakers and AI labs, the implication is uncomfortable: export controls on Nvidia chips have not stopped China from advancing at pace. They have accelerated China’s investment in domestic chip alternatives.

How Should Enterprises Respond to This Pricing Disruption?

The rational response to DeepSeek V4’s release is a systematic workload audit, not a wholesale migration. Enterprises should map their current LLM spend by task type, evaluate benchmark parity for each use case, and calculate the actual cost-saving opportunity before making infrastructure changes.

The right move depends on three factors: task sensitivity (does this workload require frontier-level reasoning or just frontier-adjacent?), data governance requirements (where do inputs and outputs need to reside?), and migration complexity (how deeply is the current stack coupled to a specific vendor’s APIs and tooling?).

For coding, document processing, summarization, and RAG workloads, the case for a partial or full migration to V4-Pro is strong. For complex multi-step reasoning, sensitive regulated data, or workloads tightly coupled to OpenAI or Anthropic’s ecosystem, the calculus is less clear-cut. The enterprises that will extract the most value from this pricing shift are those that treat LLM selection as an ongoing portfolio decision — not a one-time architectural choice.

The deeper strategic implication is this: DeepSeek V4 is the second major Chinese model release in 15 months (after R1 in January 2025) that has forced the US AI industry to reconsider its pricing assumptions. If this pace continues, the cost of frontier-adjacent intelligence will approach zero for most workloads within two to three years. Companies building businesses on AI should be designing for that world — and the defensible moat is not access to cheap tokens, but proprietary data, user trust, and workflow depth that no model swap can replicate.

FAQ

What is DeepSeek V4 and when was it released? DeepSeek V4 is a family of large language models released on April 24, 2026 by Chinese AI lab DeepSeek. It includes V4-Pro (1.6 trillion total parameters, 49B active) and V4-Flash (284B total, 13B active). Both use Mixture-of-Experts architecture and the MIT License, making them freely usable and modifiable for commercial applications.

How does DeepSeek V4-Pro pricing compare to GPT-5.5 and Claude Opus 4.7? DeepSeek V4-Pro costs $1.74 per million input tokens and $3.48 per million output tokens — roughly one-seventh the price of GPT-5.5 and one-sixth the price of Claude Opus 4.7. With cached input the gap widens to one-tenth, making V4-Pro dramatically cheaper for high-volume production workloads.

Which coding benchmarks does DeepSeek V4-Pro lead on? DeepSeek V4-Pro leads all frontier models on Terminal-Bench 2.0 (67.9%), LiveCodeBench (93.5%), and Codeforces rating (3206). On SWE-bench Verified it reaches 80.6%, just 0.2 points behind the current leader. It trails the frontier on broad reasoning benchmarks by approximately 3 to 6 months.

Is DeepSeek V4 open source? Yes. Both V4-Pro and V4-Flash are released under the MIT License — the most permissive major open-source license. Developers can download weights, fine-tune, and deploy commercially without per-token API fees.

What is the Hybrid Attention Architecture in DeepSeek V4? Hybrid Attention Architecture enables a 1 million-token context window in DeepSeek V4, letting users submit entire codebases or book-length documents in a single prompt. It competes directly with Gemini 3.1 Pro and Claude Opus 4.7 in long-context enterprise scenarios.

Should enterprises switch from GPT-5 or Claude to DeepSeek V4? It depends on the use case. Coding and document workloads are strong migration candidates. Highly regulated industries should first evaluate data residency, export controls, and supply chain security. The pragmatic approach is a workload-level audit, not a wholesale migration.