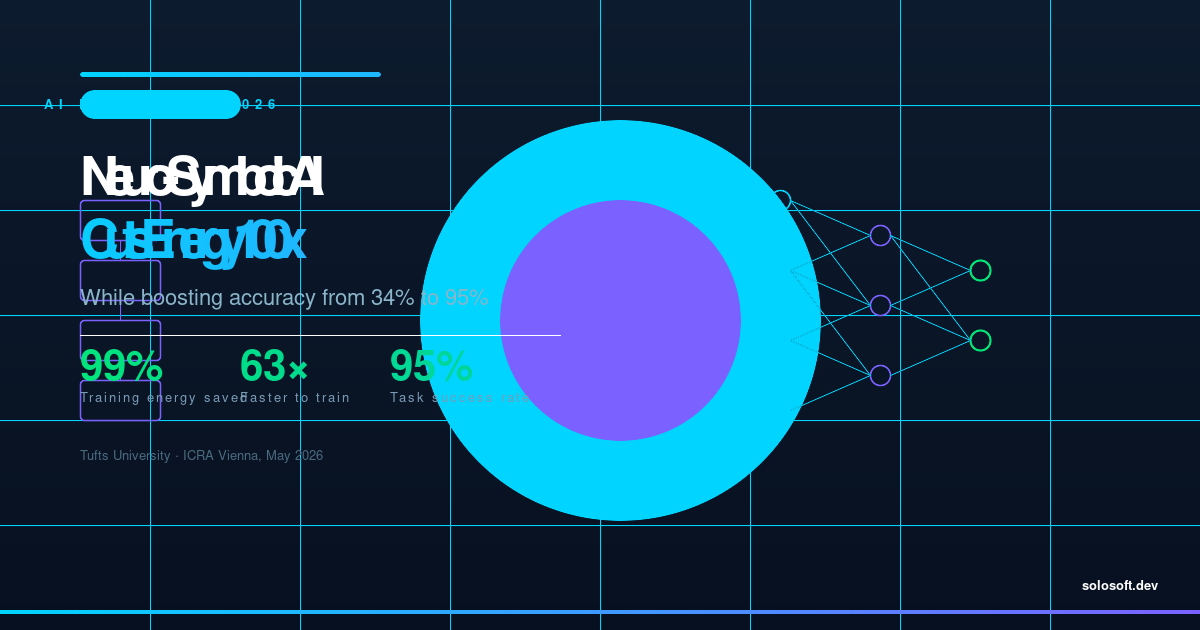

The AI industry has spent the past five years scaling its way to smarter models: adding parameters, burning more compute, and consuming electricity at a rate that has alarmed power-grid operators from Virginia to Singapore. In April 2026, a research team at Tufts University delivered a result that challenges the core assumption behind that strategy: better does not have to mean bigger or more expensive. Their neuro-symbolic vision-language-action model completed a demanding planning task with a 95 percent success rate while using just one percent of the energy that standard deep-learning models required during training and five percent during operation. Training time collapsed from more than 36 hours to 34 minutes.

The finding, to be presented at the International Conference on Robotics and Automation in Vienna in May 2026, arrives at a moment when the AI energy crisis has moved from theoretical concern to operational emergency. Hyperscalers are signing decade-long nuclear power purchase agreements, and data-center electricity demand is projected to triple by 2030 even under conservative AI adoption scenarios. A technique that achieves better accuracy at one percent of the training energy is not merely an academic curiosity. It is a direct challenge to the capital economics of every frontier lab and every enterprise deploying AI at scale.

What Is Neuro-Symbolic AI, and Why Does It Matter Now?

Neuro-symbolic AI fuses two historically separate research traditions: neural networks, which learn statistical patterns from raw data, and symbolic reasoning, which encodes structured rules and logical relationships the way humans organize knowledge. Standard deep-learning models treat every problem — including sequential planning tasks — as a pattern-matching challenge, requiring enormous amounts of training data and compute to approximate correct behavior. Neuro-symbolic systems break problems into logical sub-steps first, letting the symbolic engine handle structure while the neural component handles perception. The result is a model that reasons rather than guesses, and reasoning is computationally cheap compared to brute-force gradient descent over billions of parameters.

The timing matters because 2026 is the year agentic AI, meaning systems that autonomously plan, decide, and execute multi-step workflows, became the standard enterprise expectation. Every major model release this quarter emphasized agentic capabilities. Agentic tasks are exactly where symbolic reasoning shines: they require reliable sequential decision-making, not just fluent text generation. Neuro-symbolic architectures may therefore be better aligned with the actual workloads enterprises want to deploy than the pure transformer approach.

How Does the Tufts Breakthrough Actually Work?

The Tufts team, led by Matthias Scheutz, Karol Family Applied Technology Professor, built their model around robotic manipulation tasks — specifically the Tower of Hanoi, a classic planning problem that requires moving discs between pegs without ever placing a larger disc on a smaller one. The task demands correct sequential reasoning over many steps; random statistical approximation fails badly.

Their architecture pairs a neural perception module — which identifies objects and infers spatial relationships from visual input — with a symbolic planner that generates an explicit action sequence using classical AI planning algorithms. The neural module does not need to learn planning from scratch; it only needs to perceive accurately enough to feed the symbolic planner correct inputs. That division of labor eliminates the vast majority of training examples that a pure neural model would need to internalize planning logic through trial and error.
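The division of labor described above can be sketched in a few lines. This is a minimal illustration, not the Tufts implementation: the `perceived` state stands in for whatever a neural perception module would output, the peg encoding (tuples of disc sizes, bottom to top) is an assumption made for this example, and breadth-first search is used as one simple classical planning algorithm among many.

```python
from collections import deque


def legal_moves(state):
    """Yield (src, dst, next_state) for every move the puzzle rules allow."""
    for src, src_peg in enumerate(state):
        if not src_peg:
            continue  # nothing to pick up from an empty peg
        disc = src_peg[-1]  # only the top disc can move
        for dst, dst_peg in enumerate(state):
            if dst == src:
                continue
            if dst_peg and dst_peg[-1] < disc:
                continue  # a larger disc may never sit on a smaller one
            pegs = list(state)
            pegs[src] = src_peg[:-1]
            pegs[dst] = dst_peg + (disc,)
            yield src, dst, tuple(pegs)


def plan(start, goal):
    """Breadth-first search; returns a shortest list of (src, dst) moves."""
    frontier = deque([start])
    parent = {start: None}  # state -> (previous state, move taken)
    while frontier:
        state = frontier.popleft()
        if state == goal:
            moves = []
            while parent[state] is not None:
                state, move = parent[state]
                moves.append(move)
            return moves[::-1]
        for src, dst, nxt in legal_moves(state):
            if nxt not in parent:
                parent[nxt] = (state, (src, dst))
                frontier.append(nxt)
    return None  # goal unreachable from this start state


# Hypothetical perception output: three discs stacked on peg 0.
perceived = ((3, 2, 1), (), ())
goal = ((), (), (3, 2, 1))

moves = plan(perceived, goal)
# A 3-disc puzzle needs 2**3 - 1 = 7 moves.
print(len(moves), "moves:", moves)
```

Nothing in the planner is learned. If the perceived state is correct, the plan is correct by construction, which is why the neural side only has to get perception right.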

| Metric | Standard VLA Model | Neuro-Symbolic Model |
|---|---|---|
| Task success rate | 34% | 95% |
| Training time | 36+ hours | 34 minutes |
| Training energy (relative) | 100% | 1% |
| Inference energy (relative) | 100% | 5% |
| Architecture type | End-to-end neural | Neural + symbolic planner |

The 95 vs 34 percent success gap is not noise — it reflects a qualitative difference in how the two architectures approach planning. The standard model sometimes got lucky with short sequences; the neuro-symbolic model solved the full puzzle reliably because its symbolic planner guaranteed structural correctness, while the neural component handled the messy real-world perception problem.
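To make "structural correctness" concrete, here is a hedged sketch of a plan checker for Tower of Hanoi. The function name `validate_plan`, the list-of-lists peg encoding, and the trace format are all assumptions made for illustration. The point is only that a symbolic plan can be replayed against the rules and rejected at the first illegal step, something an end-to-end neural policy does not offer by default.

```python
def validate_plan(start, moves):
    """Replay moves on a copy of the start state; return (ok, trace)."""
    pegs = [list(peg) for peg in start]  # copy so the caller's state is untouched
    trace = []
    for step, (src, dst) in enumerate(moves, start=1):
        if not pegs[src]:
            trace.append(f"step {step}: peg {src} is empty")
            return False, trace
        disc = pegs[src][-1]
        if pegs[dst] and pegs[dst][-1] < disc:
            trace.append(
                f"step {step}: disc {disc} cannot sit on disc {pegs[dst][-1]}"
            )
            return False, trace
        pegs[dst].append(pegs[src].pop())
        trace.append(f"step {step}: moved disc {disc} from peg {src} to peg {dst}")
    return True, trace


# Two discs on peg 0, listed bottom to top.
start = [[2, 1], [], []]
good_plan = [(0, 1), (0, 2), (1, 2)]
bad_plan = [(0, 1), (0, 1)]  # second move puts disc 2 on disc 1

for name, candidate in [("good", good_plan), ("bad", bad_plan)]:
    ok, trace = validate_plan(start, candidate)
    print(name, ok)
    for line in trace:
        print("  ", line)
```

The same trace doubles as a step-by-step record of what the system did and why a plan was rejected.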

Why Is AI Energy Consumption a First-Order Problem in 2026?

AI energy demand has escalated from a talking point into a physical infrastructure constraint. Training GPT-4-class models consumed roughly 50 GWh. Estimates for training the next generation of trillion-parameter models run to 1,000 GWh or more — comparable to the annual electricity consumption of a mid-sized city. Inference is the bigger long-term concern: as enterprises deploy AI into production at scale, the inference load dwarfs training energy by an order of magnitude.

| Indicator | Source / scope | Scale |
|---|---|---|
| Nuclear PPAs signed 2025–2026 | Microsoft, Google, Amazon | Multiple 1–3 GW plants |
| New data center capacity 2026 | US alone | ~30 GW projected |
| AI share of global electricity | IEA projection 2030 | 3–4% of total consumption |
| Average training run (frontier LLM) | Top-3 lab | 50–200 GWh |

The economic pressure is as significant as the environmental one. GPU compute is the single largest cost item for AI labs and enterprise deployments alike. A model that achieves better accuracy at 1 percent of the training energy represents a potential 100-fold reduction (a 99 percent saving) in the compute bill for the training phase alone. That number changes the business case for deploying AI in resource-constrained environments, from industrial robots to edge devices in emerging markets.
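As a quick check on the arithmetic, and assuming energy use maps roughly one-to-one onto compute cost (a simplification, since real bills also include hardware, cooling, and staffing), the reduction factor is the reciprocal of the fraction of energy still used:

$$
\text{reduction factor} = \frac{E_{\text{baseline}}}{E_{\text{new}}}, \qquad
\frac{1}{0.01} = 100\times \ \text{(training)}, \qquad
\frac{1}{0.05} = 20\times \ \text{(inference)}
$$

In percentage terms, those are savings of 99 percent and 95 percent respectively.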

Does This Challenge the Scaling Laws That Have Driven the Industry?

Yes, partially but importantly. The scaling laws established by Kaplan et al. and subsequently refined by Hoffmann et al. in the Chinchilla work describe how model performance improves as parameters and training data increase in tandem. They have been the north star of frontier AI investment since 2020: more compute means better models, full stop.
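For reference, one widely cited parametric form of these laws, from the Chinchilla analysis by Hoffmann et al., models loss as a function of parameter count $N$ and training tokens $D$. The constants $E$, $A$, $B$, $\alpha$, and $\beta$ are fitted empirically and are left symbolic here:

$$
L(N, D) = E + \frac{A}{N^{\alpha}} + \frac{B}{D^{\beta}}
$$

Both correction terms shrink only as a power law, so each further reduction in loss requires a multiplicative increase in $N$ and $D$, and therefore in training compute. That cost curve is what the rest of this section is arguing about.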

The neuro-symbolic result does not disprove scaling laws for general-purpose language models. What it demonstrates is that for structured, action-oriented tasks — a large and growing subset of enterprise AI workloads — the assumption that you must scale neural components to achieve high accuracy is wrong. A smaller neural component paired with a symbolic reasoner can outperform a much larger pure-neural system on tasks that have logical structure.

```mermaid
graph TD
    A[Perception Input<br>Camera / Sensors] --> B[Neural Perception Module<br>Object Detection + Spatial Mapping]
    B --> C[Symbolic Planner<br>Classical AI Planning Algorithm]
    C --> D[Action Sequence<br>Structured Step-by-Step Plan]
    D --> E[Execution Layer<br>Robot / Agent / Workflow Engine]
    E --> F[Task Completion<br>95% Success Rate]
    G[Standard Deep Learning<br>Pure Neural End-to-End] --> H[Statistical Approximation<br>34% Success Rate]
    style A fill:#e8f4f8
    style F fill:#d4edda
    style H fill:#f8d7da
```

The strategic implication is that the industry will bifurcate: frontier labs will continue scaling transformers for open-ended reasoning, while robotics, automation, and edge AI vendors will increasingly adopt hybrid architectures that are accurate, efficient, and explainable. Enterprises evaluating AI vendors in 2026 should ask explicitly which paradigm their vendor uses and whether it matches the structure of the actual task.

What Are the Implications for Enterprise AI Deployment?

The enterprise AI landscape in April 2026 is consolidating rapidly. Despite record Q1 venture funding — $300 billion globally, with four frontier labs alone capturing $188 billion — enterprises are rationalizing to one or two AI vendors per use case. The primary driver is integration cost: deploying AI into production workflows is expensive, and switching costs compound quickly.

The neuro-symbolic result introduces a new dimension to vendor evaluation. Efficiency-first architectures reduce ongoing inference costs, make edge deployment practical, and produce models whose decisions can be audited step by step — a compliance advantage that pure-neural black boxes cannot easily match.

```mermaid
flowchart LR
    subgraph Traditional Path
        T1[Large LLM] --> T2[High Compute Cost]
        T2 --> T3[Cloud Dependency]
        T3 --> T4[Black-Box Decisions]
    end
    subgraph Neuro-Symbolic Path
        N1[Hybrid Model] --> N2[1-5 pct Energy]
        N2 --> N3[Edge Deployment Viable]
        N3 --> N4[Interpretable Steps]
    end
    T4 --> C[Enterprise Adoption Friction]
    N4 --> D[Enterprise Adoption Advantage]
    style D fill:#d4edda
    style C fill:#f8d7da
```

| Enterprise Requirement | Pure-Neural LLM | Neuro-Symbolic Hybrid |
|---|---|---|
| Explainability / audit trail | Low: statistical outputs | High: symbolic steps are logged |
| Edge / on-premise deployment | Constrained by model size | Practical at low energy cost |
| Regulatory compliance | Requires post-hoc interpretability tools | Built-in logical trace |
| Task accuracy on planning problems | Moderate: scales with parameters | High: plans are valid by construction when perception is correct |
| Ongoing inference cost | High at production scale | Potentially 20x lower |

For CIOs and AI product leaders, the practical question is whether their highest-value AI use cases involve structured, multi-step reasoning (candidate for neuro-symbolic) or open-ended generation and comprehension (where large neural models remain the right tool). Most enterprise workflows — supply chain optimization, clinical pathway planning, robotic process automation, code generation for bounded domains — fall squarely in the neuro-symbolic sweet spot.

FAQ

What is neuro-symbolic AI and how does it differ from standard deep learning? Neuro-symbolic AI fuses neural networks — which excel at pattern recognition across raw data — with symbolic reasoning, which encodes explicit rules and categories the way humans organize knowledge. Standard deep learning treats every problem as a statistical pattern-matching challenge. Neuro-symbolic AI breaks problems into logical sub-steps first, dramatically reducing the volume of data and computation required.

How much energy does the new neuro-symbolic AI model actually use compared with standard models? During training, the Tufts neuro-symbolic model used only 1 percent of the energy consumed by standard vision-language-action models — a 99 percent reduction. During operation it used 5 percent, a 95 percent reduction. Training time fell from more than 36 hours to 34 minutes, which translates directly to lower cloud compute costs and a smaller carbon footprint for production deployments.

Was accuracy sacrificed to achieve these energy savings? No — accuracy improved dramatically. On the Tower of Hanoi planning benchmark, the neuro-symbolic model achieved a 95 percent success rate versus only 34 percent for the standard baseline. The combination of symbolic reasoning with neural perception means the model plans correctly rather than guessing statistically, producing higher accuracy at a fraction of the compute budget.

What types of AI applications benefit most from neuro-symbolic approaches? Applications that require multi-step planning, interpretable decisions, or operating under strict resource constraints benefit most. Early wins are in robotics and autonomous systems, but the architecture applies equally to industrial automation, healthcare diagnostics, edge AI deployment, and any enterprise workflow where energy cost and explainability are first-class requirements.

Does this breakthrough mean the industry will stop scaling large language models? No, but it signals a fork in the road. Frontier labs will continue scaling LLMs for general reasoning tasks where parameter count matters. For domain-specific, action-oriented AI — robots, agents that execute workflows, embedded systems — neuro-symbolic architectures offer superior efficiency and accuracy. The two paradigms will coexist, with hybrid systems emerging for demanding enterprise use cases.

When will neuro-symbolic AI reach production deployments? The Tufts research is being presented at the International Conference on Robotics and Automation in Vienna in May 2026. Industrial robotics vendors typically translate conference-stage research into production within 18 to 36 months, but enterprise software platforms following agentic AI trends may incorporate efficiency-focused architectures even faster.
